[ad_1]

One of many extra thrilling functions of decentralized computing which have aroused a substantial quantity of curiosity prior to now 12 months is the idea of an incentivized decentralized on-line file storage system. Presently, in order for you your recordsdata or knowledge securely backed up “within the cloud”, you have got three selections – (1) add them to your personal servers, (2) use a centralized service like Google Drive or Dropbox or (3) use an current decentralized file system like Freenet. These approaches all have their very own faults; the primary has a excessive setup and upkeep price, the second depends on a single trusted celebration and sometimes entails heavy value markups, and the third is gradual and really restricted within the quantity of area that it permits every consumer as a result of it depends on customers to volunteer storage. Incentivized file storage protocols have the potential to supply a fourth approach, offering a a lot increased amount of storage and high quality of service by incentivizing actors to take part with out introducing centralization.

Quite a lot of platforms, together with StorJ, Maidsafe, to some extent Permacoin, and Filecoin, are trying to deal with this downside, and the issue appears easy within the sense that each one the instruments are both already there or en path to being constructed, and all we’d like is the implementation. Nevertheless, there may be one a part of the issue that’s significantly necessary: how will we correctly introduce redundancy? Redundancy is essential to safety; particularly in a decentralized community that will probably be extremely populated by newbie and informal customers, we completely can not depend on any single node to remain on-line. We may merely replicate the information, having just a few nodes every retailer a separate copy, however the query is: can we do higher? Because it seems, we completely can.

Merkle Timber and Problem-Response Protocols

Earlier than we get into the nitty gritty of redundancy, we are going to first cowl the better half: how will we create at the very least a primary system that can incentivize at the very least one celebration to carry onto a file? With out incentivization, the issue is simple; you merely add the file, look forward to different customers to obtain it, after which once you want it once more you may make a request querying for the file by hash. If we need to introduce incentivization, the issue turns into considerably more durable – however, within the grand scheme of issues, nonetheless not too exhausting.

Within the context of file storage, there are two sorts of actions that you could incentivize. The primary is the precise act of sending the file over to you once you request it. That is simple to do; the most effective technique is a straightforward tit-for-tat recreation the place the sender sends over 32 kilobytes, you ship over 0.0001 cash, the sender sends over one other 32 kilobytes, and many others. Be aware that for very massive recordsdata with out redundancy this technique is susceptible to extortion assaults – very often, 99.99% of a file is ineffective to you with out the final 0.01%, so the storer has the chance to extort you by asking for a really excessive payout for the final block. The cleverest repair to this downside is definitely to make the file itself redundant, utilizing a particular type of encoding to increase the file by, say, 11.11% in order that any 90% of this prolonged file can be utilized to recuperate the unique, after which hiding the precise redundancy proportion from the storer; nevertheless, because it seems we are going to focus on an algorithm similar to this for a distinct function later, so for now, merely settle for that this downside has been solved.

The second act that we are able to incentivize is the act of holding onto the file and storing it for the long run. This downside is considerably more durable – how will you show that you’re storing a file with out really transferring the entire thing? Thankfully, there’s a answer that isn’t too troublesome to implement, utilizing what has now hopefully established a well-recognized fame because the cryptoeconomist’s finest good friend: Merkle bushes.

Properly, Patricia Merkle could be higher in some circumstances, to be exact. Athough right here the plain outdated unique Merkle will do.

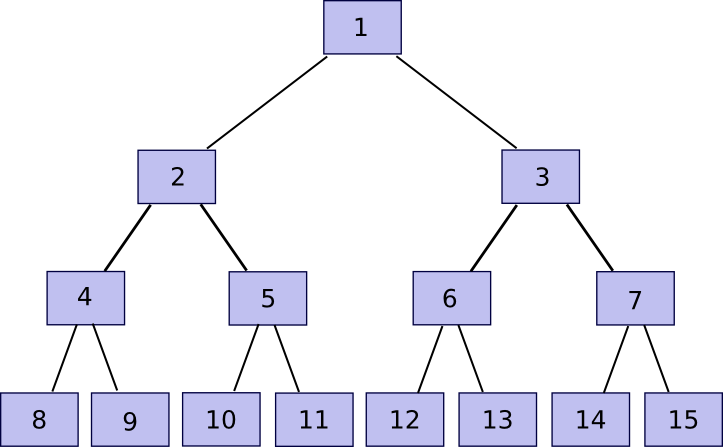

n = 2^okayfor some

okay(the padding step is avoidable, nevertheless it makes the algorithm easier to code and clarify). Then, we construct the tree. Rename the

nchunks that we obtained

chunk[n]to

chunk[2n-1], after which rebuild chunks

1to

n-1with the next rule:

chunk[i] = sha3([chunk[2*i], chunk[2*i+1]]). This allows you to calculate chunks

n/2to

n-1, then

n/4to

n/2 - 1, and so forth going up the tree till there may be one “root”,

chunk[1].

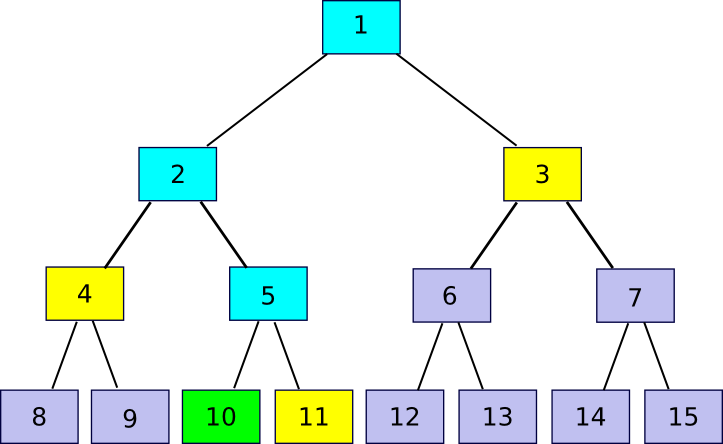

Now, word that in case you retailer solely the basis, and neglect about chunk[2] … chunk[2n-1], the entity storing these different chunks can show to you that they’ve any explicit chunk with just a few hundred bytes of information. The algorithm is comparatively easy. First, we outline a perform accomplice(n) which supplies n-1 if n is odd, in any other case n+1 – in brief, given a bit discover the chunk that it’s hashed along with with the intention to produce the father or mother chunk. Then, if you wish to show possession of chunk[k] with n <= okay <= 2n-1 (ie. any a part of the unique file), submit chunk[partner(k)], chunk[partner(k/2)] (division right here is assumed to spherical down, so eg. 11 / 2 = 5), chunk[partner(k/4)] and so forth all the way down to chunk[1], alongside the precise chunk[k]. Basically, we’re offering the whole “department” of the tree going up from that node all the best way to the basis. The verifier will then take chunk[k] and chunk[partner(k)] and use that to rebuild chunk[k/2], use that and chunk[partner(k/2)] to rebuild chunk[k/4] and so forth till the verifier will get to chunk[1], the basis of the tree. If the basis matches, then the proof is okay; in any other case it is not.

11 = accomplice(10)), 4 (

4 = accomplice(10/2)) and three (

3 = accomplice(10/4)). The verification course of entails beginning off with chunk 10, utilizing every accomplice chunk in flip to recompute first chunk 5, then chunk 2, then chunk 1, and seeing if chunk 1 matches the worth that the verifier had already saved as the basis of the file.

Be aware that the proof implicitly contains the index – typically you want to add the accomplice chunk on the best earlier than hashing and typically on the left, and if the index used to confirm the proof is totally different then the proof won’t match. Thus, if I ask for a proof of piece 422, and also you as an alternative present even a legitimate proof of piece 587, I’ll discover that one thing is mistaken. Additionally, there isn’t a approach to supply a proof with out possession of the whole related part of the Merkle tree; in case you attempt to move off faux knowledge, in some unspecified time in the future the hashes will mismatch and the ultimate root will probably be totally different.

Now, let’s go over the protocol. I assemble a Merkle tree out of the file as described above, and add this to some celebration. Then, each 12 hours, I choose a random quantity in [0, 2^k-1] and submit that quantity as a problem. If the storer replies again with a Merkle tree proof, then I confirm the proof and whether it is right ship 0.001 BTC (or ETH, or storjcoin, or no matter different token is used). If I obtain no proof or an invalid proof, then I don’t ship BTC. If the storer shops the whole file, they are going to succeed 100% of the time, in the event that they retailer 50% of the file they are going to succeed 50% of the time, and many others. If we need to make it all-or-nothing, then we are able to merely require the storer to unravel ten consecutive proofs with the intention to get a reward. The storer can nonetheless get away with storing 99%, however then we benefit from the identical redundant coding technique that I discussed above and can describe under to make 90% of the file enough in any case.

One concern that you’ll have at this level is privateness – in case you use a cryptographic protocol to let any node receives a commission for storing your file, would that not imply that your recordsdata are unfold across the web in order that anybody can doubtlessly entry them? Thankfully the reply to that is easy: encrypt the file earlier than sending it out. From this level on, we’ll assume that each one knowledge is encrypted, and ignore privateness as a result of the presence of encryption resolves that situation nearly fully (the “nearly” being that the dimensions of the file, and the instances at which you entry the file, are nonetheless public).

Trying to Decentralize

So now we’ve a protocol for paying folks to retailer your knowledge; the algorithm may even be made trust-free by placing it into an Ethereum contract, utilizing

block.prevhash as a supply of random knowledge to generate the challenges. Now let’s go to the subsequent step: determining the right way to decentralize the storage and add redundancy. The only approach to decentralize is straightforward replication: as an alternative of 1 node storing one copy of the file, we are able to have 5 nodes storing one copy every. Nevertheless, if we merely comply with the naive protocol above, we’ve an issue: one node can faux to be 5 nodes and accumulate a 5x return. A fast repair to that is to encrypt the file 5 instances, utilizing 5 totally different keys; this makes the 5 similar copies indistinguishable from 5 totally different recordsdata, so a storer will be unable to note that the 5 recordsdata are the identical and retailer them as soon as however declare a 5x reward.

However even right here we’ve two issues. First, there isn’t a approach to confirm that the 5 copies of the file are saved by 5 separate customers. If you wish to have your file backed up by a decentralized cloud, you might be paying for the service of decentralization; it makes the protocol have a lot much less utility if all 5 customers are literally storing all the things via Google and Amazon. That is really a tough downside; though encrypting the file 5 instances and pretending that you’re storing 5 totally different recordsdata will forestall a single actor from accumulating a 5x reward with 1x storage, it can not forestall an actor from accumulating a 5x reward with 5x storage, and economies of scale imply even that scenario will probably be fascinating from the standpoint of some storers. Second, there may be the difficulty that you’re taking a big overhead, and particularly taking the false-redundancy situation under consideration you might be actually not getting that a lot redundancy from it – for instance, if a single node has a 50% likelihood of being offline (fairly affordable if we’re speaking a few community of recordsdata being saved within the spare area on folks’s exhausting drives), then you have got a 3.125% likelihood at any level that the file will probably be inaccessible outright.

There may be one answer to the primary downside, though it’s imperfect and it is not clear if the advantages are price it. The concept is to make use of a mix of proof of stake and a protocol referred to as “proof of custody” – proof of simultaneous possession of a file and a personal key. If you wish to retailer your file, the concept is to randomly choose some variety of stakeholders in some forex, weighting the likelihood of choice by the variety of cash that they’ve. Implementing this in an Ethereum contract would possibly contain having members deposit ether within the contract (keep in mind, deposits are trust-free right here if the contract supplies a approach to withdraw) after which giving every account a likelihood proportional to its deposit. These stakeholders will then obtain the chance to retailer the file. Then, as an alternative of the easy Merkle tree examine described within the earlier part, the proof of custody protocol is used.

The proof of custody protocol has the profit that it’s non-outsourceable – there isn’t a approach to put the file onto a server with out giving the server entry to your non-public key on the similar time. Which means, at the very least in idea, customers will probably be a lot much less inclined to retailer massive portions of recordsdata on centralized “cloud” computing methods. In fact, the protocol accomplishes this at the price of a lot increased verification overhead, in order that leaves open the query: do we wish the verification overhead of proof of custody, or the storage overhead of getting additional redundant copies simply in case?

M of N

No matter whether or not proof of custody is a good suggestion, the subsequent step is to see if we are able to do some higher with redundancy than the naive replication paradigm. First, let’s analyze how good the naive replication paradigm is. Suppose that every node is obtainable 50% of the time, and you might be keen to take 4x overhead. In these circumstances, the prospect of failure is

0.5 ^ 4 = 0.0625 – a quite excessive worth in comparison with the “4 nines” (ie. 99.99% uptime) provided by centralized companies (some centralized companies supply 5 – 6 nines, however purely due to Talebian black swan considerations any guarantees over three nines can usually be thought-about bunk; as a result of decentralized networks don’t rely on the existence or actions of any particular firm or hopefully any particular software program bundle, nevertheless, decentralized methods arguably really can promise one thing like 4 nines legitimately). If we assume that almost all of the community will probably be quasi-professional miners, then we are able to scale back the unavailability proportion to one thing like 10%, during which case we really do get 4 nines, nevertheless it’s higher to imagine the extra pessimistic case.

What we thus want is a few type of M-of-N protocol, very similar to multisig for Bitcoin. So let’s describe our dream protocol first, and fear about whether or not it is possible later. Suppose that we’ve a file of 1 GB, and we need to “multisig” it right into a 20-of-60 setup. We break up the file up into 60 chunks, every 50 MB every (ie. 3 GB complete), such that any 20 of these chunks suffice to reconstruct the unique. That is information-theoretically optimum; you’ll be able to’t reconstruct a gigabyte out of lower than a gigabyte, however reconstructing a gigabyte out of a gigabyte is totally potential. If we’ve this sort of protocol, we are able to use it to separate every file up into 60 items, encrypt the 60 chunks individually to make them seem like unbiased recordsdata, and use an incentivized file storage protocol on each individually.

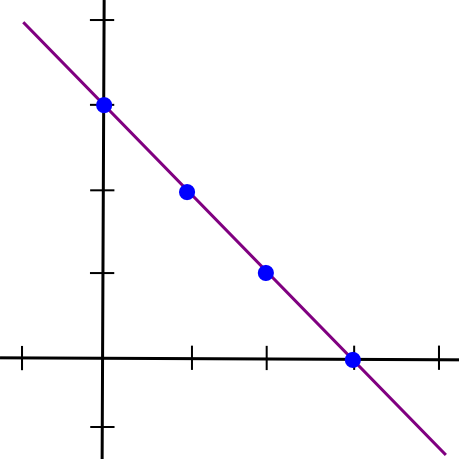

Now, right here comes the enjoyable half: such a protocol really exists. On this subsequent a part of the article, we’re going to describe a chunk of math that’s alternately referred to as both “secret sharing” or “erasure coding” relying on its utility; the algorithm used for each these names is principally the identical except one implementation element. To start out off, we are going to recall a easy perception: two factors make a line.

x = 1and the second half because the y coordinate of the road at

x = 2, draw the road, and take factors at

x = 3,

x = 4, and many others. Any two items can then be used to reconstruct the road, and from there derive the y coordinates at

x = 1and

x = 2 to get the file again.

Mathematically, there are two methods of doing this. The primary is a comparatively easy strategy involving a system of linear equations. Suppose that we file we need to break up up is the quantity “1321”. The left half is 13, the best half is 21, so the road joins (1, 13) and (2, 21). If we need to decide the slope and y-intercept of the road, we are able to simply clear up the system of linear equations:

Subtract the primary equation from the second, and also you get:

After which plug that into the primary equation, and get:

So we’ve our equation, y = 8 * x + 5. We will now generate new factors: (3, 29), (4, 37), and many others. And from any two of these factors we are able to recuperate the unique equation.

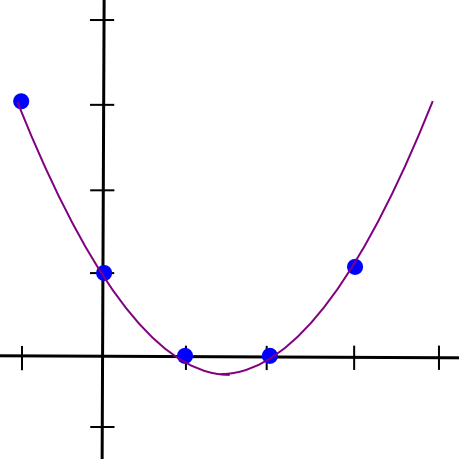

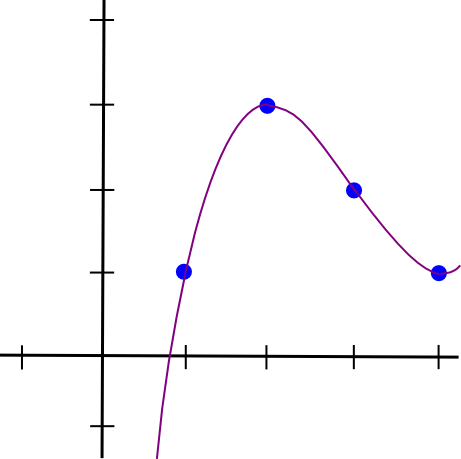

Now, let’s go one step additional, and generalize this into m-of-n. Because it seems, it is extra sophisticated however not too troublesome. We all know that two factors make a line. We additionally know that three factors make a parabola:

x = 1, 2, 3, and take additional factors on the parabola as further items. If we wish 4-of-n, we use a cubic polynomial as an alternative. Let’s undergo that latter case; we nonetheless hold our unique file, “1321”, however we’ll break up it up utilizing 4-of-7 as an alternative. Our 4 factors are

(1, 1),

(2, 3),

(3, 2),

(4, 1). So we’ve:

Eek! Properly, let’s, uh, begin subtracting. We’ll subtract equation 1 from equation 2, 2 from 3, and three from 4, to cut back 4 equations to 3, after which repeat that course of many times.

So a = 1/2. Now, we unravel the onion, and get:

So b = -9/2, after which:

So c = 12, after which:

So a = 0.5, b = -4.5, c = 12, d = -7. Here is the stunning polynomial visualized:

I created a Python utility that will help you do that (this utility additionally does different extra superior stuff, however we’ll get into that later); you’ll be able to obtain it here. In the event you needed to unravel the equations shortly, you’ll simply kind in:

> import share > share.sys_solve([[1.0, 1.0, 1.0, 1.0, -1.0], [8.0, 4.0, 2.0, 1.0, -3.0], [27.0, 9.0, 3.0, 1.0, -2.0], [64.0, 16.0, 4.0, 1.0, -1.0]]) [0.5, -4.5, 12.0, -7.0]

Be aware that placing the values in as floating level is important; in case you use integers Python’s integer division will screw issues up.

Now, we’ll cowl the better approach to do it, Lagrange interpolation. The concept right here may be very intelligent: we give you a cubic polynomial whose worth is 1 at x = 1 and 0 at x = 2, 3, 4, and do the identical for each different x coordinate. Then, we multiply and add the polynomials collectively; for instance, to match (1, 3, 2, 1) we merely take 1x the polynomial that passes via (1, 0, 0, 0), 3x the polynomial via (0, 1, 0, 0), 2x the polynomial via (0, 0, 1, 0) and 1x the polynomial via (0, 0, 0, 1) after which add these polynomials collectively to get the polynomal via (1, 3, 2, 1) (word that I mentioned the polynomial passing via (1, 3, 2, 1); the trick works as a result of 4 factors outline a cubic polynomial uniquely). This may not appear simpler, as a result of the one approach we’ve of becoming polynomials to factors to far is the cumbersome process above, however luckily, we even have an express development for it:

At x = 1, discover that the highest and backside are similar, so the worth is 1. At x = 2, 3, 4, nevertheless, one of many phrases on the highest is zero, so the worth is zero. Multiplying up the polynomials takes quadratic time (ie. ~16 steps for 4 equations), whereas our earlier process took cubic time (ie. ~64 steps for 4 equations), so it is a substantial enchancment particularly as soon as we begin speaking about bigger splits like 20-of-60. The python utility helps this algorithm too:

> import share > share.lagrange_interp([1.0, 3.0, 2.0, 1.0], [1.0, 2.0, 3.0, 4.0]) [-7.0, 12.000000000000002, -4.5, 0.4999999999999999]

The primary argument is the y coordinates, the second is the x coordinates. Be aware the alternative order right here; the code within the python module places the lower-order coefficients of the polynomial first. And eventually, let’s get our further shares:

> share.eval_poly_at([-7.0, 12.0, -4.5, 0.5], 5) 3.0 > share.eval_poly_at([-7.0, 12.0, -4.5, 0.5], 6) 11.0 > share.eval_poly_at([-7.0, 12.0, -4.5, 0.5], 7) 28.0

So right here instantly we are able to see two issues. First, it seems to be like computerized floating level numbers aren’t infinitely exact in any case; the 12 become 12.000000000000002. Second, the chunks begin getting massive as we transfer additional out; at x = 10, it goes as much as 163. That is considerably breaking the promise that the quantity of information you want to recuperate the file is similar measurement as the unique file; if we lose x = 1, 2, 3, 4 then you definitely want 8 digits to get the unique values again and never 4. These are each severe points, and ones that we’ll resolve with some extra mathematical cleverness later, however we’ll go away them apart for now.

Even with these points remaining, we’ve principally achieved victory, so let’s calculate our spoils. If we use a 20-of-60 break up, and every node is on-line 50% of the time, then we are able to use combinatorics – particularly, the binomial distribution formula – to compute the likelihood that our knowledge is okay. First, to set issues up:

> def fac(n): return 1 if n==0 else n * fac(n-1) > def select(n,okay): return fac(n) / fac(okay) / fac(n-k) > def prob(n,okay,p): return select(n,okay) * p ** okay * (1-p) ** (n-k)

The final components computes the likelihood that precisely okay servers out of n will probably be on-line if every particular person server has a likelihood p of being on-line. Now, we’ll do:

> sum([prob(60, k, 0.5) for k in range(0, 20)]) 0.0031088013296633353

99.7% uptime with solely 3x redundancy – a superb step up from the 87.5% uptime that 3x redundancy would have given us had easy replication been the one instrument in our toolkit. If we crank the redundancy as much as 4x, then we get six nines, and we are able to cease there as a result of the likelihood both Ethereum or the whole web will crash outright is larger than 0.0001% anyway (actually, you are more likely to die tomorrow). Oh, and if we assume every machine has 90% uptime (ie. hobbyist “farmers”), then with a 1.5x-redundant 20-of-30 protocol we get a fully overkill twelve nines. Fame methods can be utilized to maintain observe of how usually every node is on-line.

Coping with Errors

We’ll spend the remainder of this text discussing three extensions to this scheme. The primary is a priority that you’ll have passed over studying the above description, however one which is nonetheless necessary: what occurs if some node tries to actively cheat? The algorithm above can recuperate the unique knowledge of a 20-of-60 break up from any 20 items, however what if one of many knowledge suppliers is evil and tries to supply faux knowledge to screw with the algorithm. The assault vector is a quite compelling one:

> share.lagrange_interp([1.0, 3.0, 2.0, 5.0], [1.0, 2.0, 3.0, 4.0]) [-11.0, 19.333333333333336, -8.5, 1.1666666666666665]

Taking the 4 factors of the above polynomial, however altering the final worth to five, offers a totally totally different outcome. There are two methods of coping with this downside. One is the apparent approach, and the opposite is the mathematically intelligent approach. The plain approach is apparent: when splitting a file, hold the hash of every chunk, and evaluate the chunk towards the hash when receiving it. Chunks that don’t match their hashes are to be discarded.

The intelligent approach is considerably extra intelligent; it entails some spooky not-quite-moon-math referred to as the Berlekamp-Welch algorithm. The concept is that as an alternative of becoming only one polynomial, P, we think about into existence two polynomials, Q and E, such that Q(x) = P(x) * E(x), and attempt to clear up for each Q and E on the similar time. Then, we compute P = Q / E. The concept is that if the equation holds true, then for all x both P(x) = Q(x) / E(x) or E(x) = 0; therefore, apart from computing the unique polynomial we magically isolate what the errors are. I will not go into an instance right here; the Wikipedia article has a wonderfully first rate one, and you’ll attempt it your self with:

> map(lambda x: share.eval_poly_at([-7.0, 12.0, -4.5, 0.5], x), [1, 2, 3, 4, 5, 6]) [1.0, 3.0, 2.0, 1.0, 3.0, 11.0] > share.berlekamp_welch_attempt([1.0, 3.0, 18018.0, 1.0, 3.0, 11.0], [1, 2, 3, 4, 5, 6], 3) [-7.0, 12.0, -4.5, 0.5] > share.berlekamp_welch_attempt([1.0, 3.0, 2.0, 1.0, 3.0, 0.0], [1, 2, 3, 4, 5, 6], 3) [-7.0, 12.0, -4.5, 0.5]

Now, as I discussed, this mathematical trickery is just not actually all that wanted for file storage; the easier strategy of storing hashes and discarding any piece that doesn’t match the recorded hash works simply superb. However it’s by the way fairly helpful for an additional utility: self-healing Bitcoin addresses. Bitcoin has a base58check encoding algorithm, which can be utilized to detect when a Bitcoin handle has been mistyped and returns an error so you don’t unintentionally ship 1000’s of {dollars} into the abyss. Nevertheless, utilizing what we all know, we are able to really do higher and make an algorithm which not solely detects mistypes but additionally really corrects the errors on the fly. We do not use any type of intelligent handle encoding for Ethereum as a result of we desire to encourage use of title registry-based options, but when an handle encoding scheme was demanded one thing like this might be used.

Finite Fields

Now, we get again to the second downside: as soon as our x coordinates get slightly increased, the y coordinates begin taking pictures off in a short time towards infinity. To unravel this, what we’re going to do is nothing in need of fully redefining the foundations of arithmetic as we all know them. Particularly, let’s redefine our arithmetic operations as:

a + b := (a + b) % 11 a - b := (a - b) % 11 a * b := (a * b) % 11 a / b := (a * b ** 9) % 11

That “%” signal there may be “modulo”, ie. “take the rest of dividing that vaue by 11”, so we’ve

7 + 5 = 1,

6 * 6 = 3(and its corollary

3 / 6 = 6), and many others. We at the moment are solely allowed to cope with the numbers 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10. The stunning factor is that, whilst we do that, the entire guidelines about conventional arithmetic nonetheless maintain with our new arithmetic;

(a * b) * c = a * (b * c),

(a + b) * c = (a * c) + (b * c),

a / b * b = aif

b != 0,

(a^2 - b^2) = (a - b)*(a + b), and many others. Thus, we are able to merely take the algebra behind our polynomial encoding that we used above, and transplant it over into the brand new system. Although the instinct of a polynomial curve is totally borked – we’re now coping with summary mathematical objects and never something resembling precise factors on a airplane – as a result of our new algebra is self-consistent, the formulation nonetheless work, and that is what counts.

> e = share.mkModuloClass(11) > P = share.lagrange_interp(map(e, [1, 3, 2, 1]), map(e, [1, 2, 3, 4])) > P [4, 1, 1, 6] > map(lambda x: share.eval_poly_at(map(e, P), e(x)), vary(1, 9)) [1, 3, 2, 1, 3, 0, 6, 2] > share.berlekamp_welch_attempt(map(e, [1, 9, 9, 1, 3, 0, 6, 2]), map(e, [1, 2, 3, 4, 5, 6, 7, 8]), 3) [4, 1, 1, 6]

The “

map(e, [v1, v2, v3])” is used to transform extraordinary integers into components on this new area; the software program library contains an implementation of our loopy modulo 11 numbers that interfaces with arithmetic operators seamlessly so we are able to merely swap them in (eg.

print e(6) * e(6)returns

3). You may see that all the things nonetheless works – besides that now, as a result of our new definitions of addition, subtraction, multiplication and division at all times return integers in

[0 ... 10] we by no means want to fret about both floating level imprecision or the numbers increasing because the x coordinate will get too excessive.

Now, in actuality these comparatively easy modulo finite fields aren’t what are normally utilized in error-correcting codes; the commonly most popular development is one thing referred to as a Galois field (technically, any area with a finite variety of components is a Galois area, however typically the time period is used particularly to check with polynomial-based fields as we are going to describe right here). The concept is that the weather within the area at the moment are polynomials, the place the coefficients are themselves values within the area of integers modulo 2 (ie. a + b := (a + b) % 2, and many others). Including and subtracting work as usually, however multiplying is itself modulo a polynomial, particularly x^8 + x^4 + x^3 + x + 1. This quite sophisticated multilayered development lets us have a area with precisely 256 components, so we are able to conveniently retailer each aspect in a single byte and each byte as one aspect. If we need to work on chunks of many bytes at a time, we merely apply the scheme in parallel (ie. if every chunk is 1024 bytes, decide 10 polynomials, one for every byte, lengthen them individually, and mix the values at every x coordinate to get the chunk there).

However it’s not necessary to know the precise workings of this; the salient level is that we are able to redefine +, –, * and / in such a approach that they’re nonetheless totally self-consistent however at all times take and output bytes.

Going Multidimensional: The Self-Therapeutic Dice

Now, we’re utilizing finite fields, and we are able to cope with errors, however one situation nonetheless stays: what occurs when nodes do go down? At any cut-off date, you’ll be able to depend on 50% of the nodes storing your file staying on-line, however what you can’t depend on is similar nodes staying on-line endlessly – ultimately, just a few nodes are going to drop out, then just a few extra, then just a few extra, till ultimately there aren’t sufficient of the unique nodes left on-line. How will we combat this gradual attrition? One technique is that you would merely watch the contracts which might be rewarding every particular person file storage occasion, seeing when some cease paying out rewards, after which re-upload the file. Nevertheless, there’s a downside: with the intention to re-upload the file, you want to reconstruct the file in its entirety, a doubtlessly troublesome process for the multi-gigabyte films that at the moment are wanted to fulfill folks’s seemingly insatiable needs for multi-thousand pixel decision. Moreover, ideally we wish the community to have the ability to heal itself with out requiring energetic involvement from a centralized supply, even the proprietor of the recordsdata.

Thankfully, such an algorithm exists, and all we have to accomplish it’s a intelligent extension of the error correcting codes that we described above. The elemental concept that we are able to depend on is the truth that polynomial error correcting codes are “linear”, a mathematical time period which principally signifies that it interoperates properly with multiplication and addition. For instance, think about:

> share.lagrange_interp([1.0, 3.0, 2.0, 1.0], [1.0, 2.0, 3.0, 4.0]) [-7.0, 12.000000000000002, -4.5, 0.4999999999999999] > share.lagrange_interp([10.0, 5.0, 5.0, 10.0], [1.0, 2.0, 3.0, 4.0]) [20.0, -12.5, 2.5, 0.0] > share.lagrange_interp([11.0, 8.0, 7.0, 11.0], [1.0, 2.0, 3.0, 4.0]) [13.0, -0.5, -2.0, 0.5000000000000002] > share.lagrange_interp([22.0, 16.0, 14.0, 22.0], [1.0, 2.0, 3.0, 4.0]) [26.0, -1.0, -4.0, 1.0000000000000004]

See how the enter to the third interpolation is the sum of the inputs to the primary two, and the output finally ends up being the sum of the primary two outputs, after which after we double the enter it additionally doubles the output. So what’s the advantage of this? Properly, here is the intelligent trick. Erasure cording is itself a linear components; it depends solely on multiplication and addition. Therefore, we’re going to apply erasure coding to itself. So how are we going to do that? Right here is one potential technique.

First, we take our 4-digit “file” and put it right into a 2×2 grid.

Then, we use the identical polynomial interpolation and extension course of as above to increase the file alongside each the x and y axes:

1 3 5 7 2 1 0 10 3 10 4 8

After which we apply the method once more to get the remaining 4 squares:

1 3 5 7 2 1 0 10 3 10 6 2 4 8 1 5

Be aware that it would not matter if we get the final 4 squares by increasing horizontally and vertically; as a result of secret sharing is linear it’s commutative with itself, so that you get the very same reply both approach. Now, suppose we lose a quantity within the center, say, 6. Properly, we are able to do a restore vertically:

> share.restore([5, 0, None, 1], e) [5, 0, 6, 1]

Or horizontally:

> share.restore([3, 10, None, 2], e) [3, 10, 6, 2]

And tada, we get 6 in each circumstances. That is the stunning factor: the polynomials work equally properly on each the x or the y axis. Therefore, if we take these 16 items from the grid, and break up them up amongst 16 nodes, and one of many nodes disappears, then nodes alongside both axis can come collectively and reconstruct the information that was held by that specific node and begin claiming the reward for storing that knowledge. Ideally, we are able to even lengthen this course of past 2 dimensions, producing a three-dimensional dice, a four-dimensional hypercube or extra – the acquire of utilizing extra dimensions is ease of reconstruction, and the associated fee is a decrease diploma of redundancy. Thus, what we’ve is an information-theoretic equal of one thing that sounds prefer it got here straight out of science-fiction: a extremely redundant, interlinking, modular self-healing dice, that may shortly regionally detect and repair its personal errors even when massive sections of the dice had been to be broken, co-opted or destroyed.

“The dice can nonetheless perform even when as much as 78% of it had been to be destroyed…”

So, let’s put all of it collectively. You might have a ten GB file, and also you need to break up it up throughout the community. First, you encrypt the file, and then you definitely break up the file into, as an example, 125 chunks. You prepare these chunks right into a three-dimensional 5x5x5 dice, work out the polynomial alongside every axis, and “lengthen” each in order that on the finish you have got a 7x7x7 dice. You then search for 343 nodes keen to retailer each bit of information, and inform every node solely the id of the opposite nodes which might be alongside the identical axis (we need to make an effort to keep away from a single node gathering collectively a whole line, sq. or dice and storing it and calculating any redundant chunks as wanted in real-time, getting the reward for storing all of the chunks of the file with out really offering any redundancy.

As a way to really retrieve the file, you’ll ship out a request for the entire chunks, then see which of the items coming in have the very best bandwidth. Chances are you’ll use the pay-per-chunk protocol to pay for the sending of the information; extortion is just not a difficulty as a result of you have got such excessive redundancy so nobody has the monopoly energy to disclaim you the file. As quickly because the minimal variety of items arrive, you’ll do the maths to decrypt the items and reconstitute the file regionally. Maybe, if the encoding is per-byte, you might even have the ability to apply this to a Youtube-like streaming implementation, reconstituting one byte at a time.

In some sense, there may be an unavoidable tradeoff between self-healing and vulnerability to this sort of faux redundancy: if components of the community can come collectively and recuperate a lacking piece to supply redundancy, then a malicious massive actor within the community can recuperate a lacking piece on the fly to supply and cost for faux redundancy. Maybe some scheme involving including one other layer of encryption on each bit, hiding the encryption keys and the addresses of the storers of the person items behind one more erasure code, and incentivizing the revelation course of solely at some explicit instances would possibly type an optimum stability.

Secret Sharing

Originally of the article, I discussed one other title for the idea of erasure coding, “secret sharing”. From the title, it is easy to see how the 2 are associated: you probably have an algorithm for splitting knowledge up amongst 9 nodes such that 5 of 9 nodes are wanted to recuperate it however 4 of 9 cannot, then one other apparent use case is to make use of the identical algorithm for storing non-public keys – break up up your Bitcoin pockets backup into 9 components, give one to your mom, one to your boss, one to your lawyer, put three into just a few security deposit containers, and many others, and in case you neglect your password then you’ll ask every of them individually and likelihood is at the very least 5 gives you your items again, however the people themselves are sufficiently far other than one another that they are unlikely to collude with one another. This can be a very reputable factor to do, however there may be one implementation element concerned in doing it proper.

The difficulty is that this: though 4 of 9 cannot recuperate the unique key, 4 of 9 can nonetheless come collectively and have numerous details about it – particularly, 4 linear equations over 5 unknowns. This reduces the dimensionality of the selection area by an element of 5, so as an alternative of two256 non-public keys to look via they now have solely 251. In case your key’s 180 bits, that goes all the way down to 236 – trivial work for a fairly highly effective pc. The way in which we repair that is by erasure-coding not simply the non-public key, however quite the non-public key plus 4x as many bytes of random gook. Extra exactly, let the non-public key be the zero-degree coefficient of the polynomial, choose 4 random values for the subsequent 4 coefficients, and take values from that. This makes each bit 5 instances longer, however with the profit that even 4 of 9 now have the whole selection area of two180 or 2256 to look via.

Conclusion

So there we go, that is an introduction to the facility of erasure coding – arguably the only most underhyped set of algorithms (besides maybe SCIP) in pc science or cryptography. The concepts right here primarily are to file storage what multisig is to sensible contracts, permitting you to get the completely most potential quantity of safety and redundancy out of no matter ratio of storage overhead you might be keen to just accept. It is an strategy to file storage availability that strictly supersedes the chances provided by easy splitting and replication (certainly, replication is definitely precisely what you get in case you attempt to apply the algorithm with a 1-of-n technique), and can be utilized to encapsulate and individually deal with the issue of redundancy in the identical approach that encryption encapsulates and individually handles the issue of privateness.

Decentralized file storage remains to be removed from a solved downside; though a lot of the core know-how, together with erasure coding in Tahoe-LAFS, has already been carried out, there are actually many minor and not-so-minor implementation particulars that also have to be solved for such a setup to truly work. An efficient fame system will probably be required for measuring quality-of-service (eg. a node up 99% of the time is price at the very least 3x greater than a node up 50% of the time). In some methods, incentivized file storage even relies on efficient blockchain scalability; having to implicitly pay for the charges of 343 transactions going to verification contracts each hour is just not going to work till transaction charges turn out to be far decrease than they’re at this time, and till then some extra coarse-grained compromises are going to be required. However then once more, just about each downside within the cryptocurrency area nonetheless has a really lengthy approach to go.

[ad_2]

Source link